
Technical analysis of OpenAI's latest embedding models, GPT-4 Turbo updates, and API key configurations. Features actionable integration strategies for developers.

OpenAI has released a set of updates to its models and API: two new embedding models, an updated GPT-4 Turbo preview model, an updated moderation model, and new tools for managing API usage.

At the same time, the organisation has cut the price of GPT-3.5 Turbo, making it cheaper for developers to run at volume.


OpenAI is rolling out two new embedding models: the smaller, highly efficient text-embedding-3-small and the larger, more capable text-embedding-3-large. An embedding is a sequence of numbers that represents the concepts in a piece of text. Because related texts map to nearby vectors, embeddings make it easier for machine learning systems to perform tasks such as clustering and retrieval for Retrieval-Augmented Generation (RAG).
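As a rough illustration of how retrieval over embeddings works, the sketch below ranks documents by cosine similarity to a query vector. The three-dimensional vectors and document names are made-up stand-ins for real embeddings (which have far more dimensions), and the function names are hypothetical helpers, not part of any OpenAI library.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)

def rank_documents(query_vec, doc_vecs):
    """Return document ids ordered from most to least similar to the query."""
    scored = [(cosine_similarity(query_vec, vec), doc_id)
              for doc_id, vec in doc_vecs.items()]
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored]

# Toy vectors standing in for real embeddings (values are invented).
docs = {
    "pricing": [0.9, 0.1, 0.0],
    "moderation": [0.0, 0.2, 0.9],
    "embeddings": [0.7, 0.6, 0.1],
}
query = [1.0, 0.2, 0.0]

print(rank_documents(query, docs))  # ['pricing', 'embeddings', 'moderation']
```

In a RAG pipeline, the top-ranked documents would then be passed to the language model as context. Production systems usually delegate this ranking to a vector database rather than a linear scan.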

Execution Strategy: Data scientists should evaluate their existing vector databases for migration feasibility. Moving to the V3 models can improve semantic retrieval accuracy on large enterprise datasets, but vectors produced by different embedding models are not comparable, so a migration means re-embedding the stored corpus.

The text-embedding-3-small model clearly outperforms its predecessor, text-embedding-ada-002, whilst text-embedding-3-large is OpenAI's best-performing embedding model to date.

The price of the smaller model has also been cut sharply, making it more viable for high-volume data processing.

| | text-embedding-3-small | text-embedding-3-large |
| --- | --- | --- |
| Price | $0.00002 / 1k tokens | $0.00013 / 1k tokens |
| MIRACL average | 44.0 | 54.9 |
| MTEB average | 62.3 | 64.6 |
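To make the price difference concrete, here is a minimal sketch that estimates the cost of embedding a corpus with each model, using the per-1k-token prices listed above. The corpus size of 50 million tokens is a hypothetical figure chosen for illustration, and the helper name is our own.

```python
# Prices in USD per 1k tokens, taken from the table above.
PRICE_PER_1K_TOKENS_USD = {
    "text-embedding-3-small": 0.00002,
    "text-embedding-3-large": 0.00013,
}

def embedding_cost_usd(model, total_tokens):
    """Estimated cost in USD of embedding `total_tokens` tokens with `model`."""
    return PRICE_PER_1K_TOKENS_USD[model] * total_tokens / 1000

corpus_tokens = 50_000_000  # hypothetical corpus size

for model in PRICE_PER_1K_TOKENS_USD:
    print(f"{model}: ${embedding_cost_usd(model, corpus_tokens):.2f}")
# text-embedding-3-small: $1.00
# text-embedding-3-large: $6.50
```

Whether the larger model's higher benchmark scores justify the 6.5x price depends on the retrieval quality a given application needs.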

The updated GPT-3.5 Turbo model is cheaper to run, more accurate, and fixes a bug affecting non-English text.

Input prices fall by 50% to $0.0005 per 1k tokens, and output prices fall by 25% to $0.0015 per 1k tokens.
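The arithmetic behind these figures can be sketched as follows. The snippet computes the cost of a single request at the new prices and back-calculates the previous prices implied by the stated percentage cuts. The token counts in the usage example are hypothetical.

```python
# New GPT-3.5 Turbo prices in USD per 1k tokens, as stated above.
INPUT_PRICE_PER_1K = 0.0005
OUTPUT_PRICE_PER_1K = 0.0015

def request_cost_usd(input_tokens, output_tokens):
    """Cost in USD of one request at the new prices."""
    total = input_tokens * INPUT_PRICE_PER_1K + output_tokens * OUTPUT_PRICE_PER_1K
    return total / 1000

def price_before_cut(new_price, reduction):
    """Price implied before a fractional reduction (0.5 means a 50% cut)."""
    return new_price / (1 - reduction)

# A hypothetical request: 1,200 prompt tokens and 300 completion tokens.
print(f"${request_cost_usd(1200, 300):.5f}")                # $0.00105
print(f"${price_before_cut(INPUT_PRICE_PER_1K, 0.50):.4f}")   # $0.0010
print(f"${price_before_cut(OUTPUT_PRICE_PER_1K, 0.25):.4f}")  # $0.0020
```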

The model is also more accurate when responding in a requested format, such as JSON, and fixes a text-encoding bug that affected non-English function calls.
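Even with better format adherence, it is prudent to validate structured output before using it. The sketch below builds the arguments for a chat completion that requests JSON and parses the reply defensively. The `response_format` setting comes from OpenAI's Chat Completions API rather than from this article, and the helper names and prompt wording are assumptions made for illustration. No network call is made here.

```python
import json

def build_json_request(user_prompt, model="gpt-3.5-turbo"):
    """Build keyword arguments for a chat completion that asks for JSON output."""
    return {
        "model": model,
        # JSON mode; the messages must also mention JSON explicitly.
        "response_format": {"type": "json_object"},
        "messages": [
            {"role": "system", "content": "Reply with a single JSON object."},
            {"role": "user", "content": user_prompt},
        ],
    }

def parse_json_reply(reply_text):
    """Return the reply as a dict, or None if it is not a valid JSON object."""
    try:
        parsed = json.loads(reply_text)
    except json.JSONDecodeError:
        return None
    return parsed if isinstance(parsed, dict) else None

request = build_json_request("List two primary colours under the key 'colours'.")
# With the official client this would be sent roughly as:
#   client.chat.completions.create(**request)

print(parse_json_reply('{"colours": ["red", "blue"]}'))  # {'colours': ['red', 'blue']}
print(parse_json_reply("Sure! Here you go: red, blue"))  # None
```

Returning `None` on failure lets the caller decide whether to retry the request or fall back to a default.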

Applications that use the gpt-3.5-turbo model alias will be moved to this version automatically and will pick up the new pricing.

The latest GPT-4 Turbo preview model completes tasks more thoroughly, addressing reports that earlier versions stopped short of finishing them.

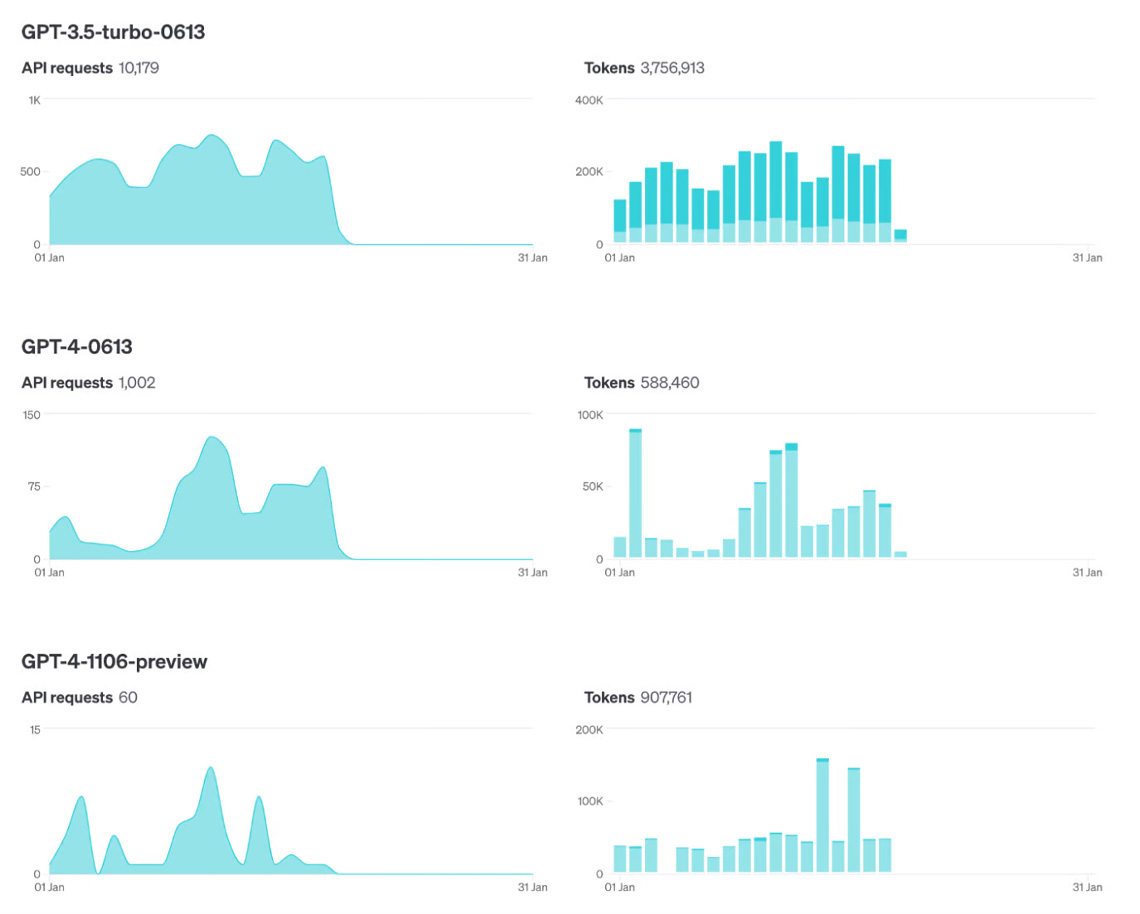

More than 70% of requests from GPT-4 API customers have moved to GPT-4 Turbo. The shift is driven by its more recent knowledge cut-off, its larger 128,000-token context window, and its lower prices.

The gpt-4-0125-preview release completes tasks such as code generation more thoroughly and is intended to reduce "laziness", where earlier versions left long tasks unfinished. It also fixes a bug affecting non-English UTF-8 generations.

To simplify integration, a new alias, gpt-4-turbo-preview, always points to the most recent GPT-4 Turbo preview release.
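The alias trades reproducibility for convenience: behaviour can change whenever it is repointed to a newer release. One possible policy, sketched below, pins the dated snapshot in production and uses the alias elsewhere. The environment names and the policy itself are assumptions for illustration, not a recommendation from OpenAI.

```python
PINNED_MODEL = "gpt-4-0125-preview"   # fixed snapshot: stable behaviour
ALIAS_MODEL = "gpt-4-turbo-preview"   # alias: follows the newest preview release

def choose_model(environment):
    """Pick a model name for a deployment environment (hypothetical policy)."""
    if environment == "production":
        # Pin the snapshot so behaviour changes only when we opt in.
        return PINNED_MODEL
    # Development and staging track the latest preview to surface changes early.
    return ALIAS_MODEL

print(choose_model("production"))   # gpt-4-0125-preview
print(choose_model("development"))  # gpt-4-turbo-preview
```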

General availability of GPT-4 Turbo with vision is planned for the coming months.


The new text-moderation-007 model supports safe AI deployment. As OpenAI's most robust moderation model to date, it gives developers a more reliable way to identify potentially harmful text.

The platform now gives developers better visibility into usage and finer control over API keys.

Administrators can now assign permissions to individual API keys from the dashboard. A key can be given read-only access or restricted to specific endpoints, in line with the principle of least privilege.

Once tracking is turned on, the usage dashboard and usage export expose metrics broken down by individual API key.

Execution Strategy: Engineering leads should segment API keys by project and purpose. Assign read-only keys to analytics dashboards, and reserve endpoint-restricted keys for backend services. This limits the damage a leaked or misused key can do across teams and product deployments.
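On the application side, key segmentation might look like the sketch below, where each service role reads its own key from a separate environment variable. The role names and variable names are hypothetical. The permissions themselves are configured in the OpenAI dashboard, not in this code.

```python
import os

# Hypothetical mapping from service role to the environment variable
# holding that role's API key.
KEY_ENV_VARS = {
    "analytics": "OPENAI_KEY_ANALYTICS_READONLY",
    "backend": "OPENAI_KEY_BACKEND_RESTRICTED",
}

def api_key_for(role, environ=None):
    """Return the API key assigned to `role`, failing loudly if it is missing."""
    env = os.environ if environ is None else environ
    if role not in KEY_ENV_VARS:
        raise ValueError(f"unknown role: {role!r}")
    var_name = KEY_ENV_VARS[role]
    key = env.get(var_name)
    if not key:
        # Never fall back to another role's key: that would defeat least privilege.
        raise RuntimeError(f"environment variable {var_name} is not set")
    return key

# Usage with a stand-in environment (placeholder values, not real keys).
fake_env = {"OPENAI_KEY_ANALYTICS_READONLY": "sk-placeholder-readonly"}
print(api_key_for("analytics", fake_env))  # sk-placeholder-readonly
```

Keeping one key per role also makes the per-key usage metrics meaningful, since each key's traffic maps to a single service.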

OpenAI's roadmap points to further improvements to API usage management and the general availability of GPT-4 Turbo with vision. Developers should follow the release notes and fold relevant changes into their pipelines.


Taken together, these updates mark a maturing of how large language models are delivered. Lower prices, more capable models, and tighter API key controls give both institutional and independent developers more room to build.

The embedding improvements in particular highlight an industry trend: raw text generation is increasingly complemented by contextual understanding and fast semantic retrieval across large enterprise datasets.

